How to Compute Immediacy in Single Case Experimental Designs

Last updated: Apr 29, 2023

by Prathiba Natesan Batley, PhD | Professor, University of Louisville

In single case experimental designs (SCEDs), it is often unclear what indicates a treatment effect and which analysis best estimates it. Part of the difficulty stems from autocorrelation in the data, which violates the independence-of-observations assumption that most parametric statistical analyses require. In single case designs, the observation at a given time-point tends to be correlated with the observation at the previous time-point. This not only limits the applicable data analytic techniques, but also confounds our understanding of whether a pattern in the data is due to a treatment effect, random variation, autocorrelation, or a combination of two or more of these.

The What Works Clearinghouse Standards for SCEDs prescribe examining level, trend, variability, immediacy, and effect size when analyzing SCED data. Many effect sizes have been used to summarize SCED data, such as non-overlap of all pairs (NAP) (Parker & Vannest, 2009), percentage of data-points exceeding the median (PEM) (Ma, 2006), percentage of non-overlapping data (PND) (Scruggs et al., 1987), percentage of all non-overlapping data (PAND) (Parker et al., 2007), improvement rate difference (IRD) (Parker et al., 2007), the standardized mean difference with a small sample correction (Hedges et al., 2012; Hedges et al., 2013), and the log response ratio (Pustejovsky, 2018). However, effect sizes are not the topic here. Instead, we will focus on immediacy.

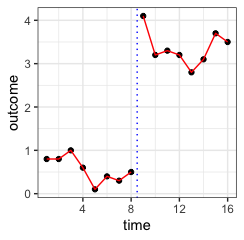

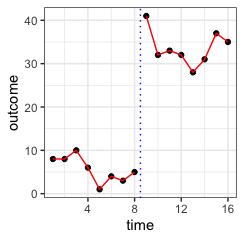

Immediacy is exhibited when the treatment begins or stops taking effect immediately upon being introduced or withdrawn, respectively. Classically, in visual analysis it is measured as the difference between the mean or median of the first 3-5 observations in a phase and the mean or median of the last 3-5 observations in the preceding phase. However, there is no rule of thumb for interpreting this immediacy index. For instance, consider the two plots in Figure 1. For the plot on the left, the immediacy index is 2.9 (i.e., median of (4, 3.2, 3.3) – median of (.4, .3, .5)), while for the one on the right it is 29. Visually, however, the separation between the two phases is about the same in both plots, indicating about the same immediacy of treatment impact. This is why the traditional computation of immediacy is not precise: in this case, its value depends on the scale of the outcome.

Figure 1a: Small immediacy (dataset A)

Figure 1b: Large immediacy (dataset B)
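The traditional index described above is simple enough to reproduce in a few lines. The following is a minimal Python sketch, not code from the cited papers: the function name `immediacy_index` and its parameters are hypothetical, and because the raw values for dataset B are not listed here, the sketch assumes they are the dataset A values multiplied by 10.

```python
from statistics import median


def immediacy_index(prev_phase, next_phase, k=3, summary=median):
    """Traditional immediacy index: summary of the first k observations of
    the next phase minus summary of the last k observations of the
    previous phase. (Hypothetical helper, for illustration only.)"""
    if len(prev_phase) < k or len(next_phase) < k:
        raise ValueError("each phase needs at least k observations")
    return summary(next_phase[:k]) - summary(prev_phase[-k:])


# Dataset A: the six values quoted in the text.
baseline_tail_a = [0.4, 0.3, 0.5]   # last three baseline observations
treatment_head_a = [4, 3.2, 3.3]    # first three treatment observations

# Dataset B: ASSUMPTION, the same values multiplied by 10.
baseline_tail_b = [4, 3, 5]
treatment_head_b = [40, 32, 33]

index_a = immediacy_index(baseline_tail_a, treatment_head_a)
index_b = immediacy_index(baseline_tail_b, treatment_head_b)

print(round(index_a, 1), round(index_b, 1))
```

Under that assumption the two indices come out as 2.9 and 29: a tenfold difference produced entirely by the unit of measurement, even though the pattern of change is the same.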

Natesan and Hedges (2017) studied the quantification of immediacy using the Bayesian unknown change-point (BUCP) model. This model treats the transition point between the phases of the SCED as unknown, and estimates this parameter (i.e., a time-point) along with the means, standard deviations, and autocorrelations of the outcome variable in each phase. When the mode of the posterior of the change-point (that is, the most frequently occurring estimate of the change-point, measured as a time-point) is the same as the time-point at which the treatment was introduced or withdrawn, there is evidence to support immediacy of the treatment effect. The credible interval (i.e., the Bayesian analogue of a confidence interval) indicates the strength of this evidence. Natesan Batley et al. (2020) extended the BUCP model to a four-phase ABAB design using variational Bayesian estimation. The technical details of the models can be found in the cited papers; what I want to show here are the results of applying the model to both datasets illustrated in Figures 1a and 1b.

The change-point is accurately estimated to have a posterior mean of 8 and a 95% credible interval of [8, 8] for both datasets. Immediacy, in other words, is estimated to be identical for both datasets. We see that the change-point estimate is not affected by the scale of the data, indicating that the treatment in both conditions caused an immediate change in the outcome variable following its introduction. The credible interval, which contains only time-point 8, indicates that the evidence is strong. Thus, the BUCP model is a better alternative to the traditional method of estimating immediacy: it allows the data to speak for themselves when the scale of a change in the outcome does not matter for drawing scientific, clinical, or otherwise practical conclusions.
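To build intuition for why a change-point estimate does not depend on scale, here is a deliberately simplified Python sketch. It is not the BUCP model: it ignores autocorrelation, places a uniform prior on the change-point, and plugs in maximum likelihood estimates of each phase's mean and variance instead of sampling them. The function names are hypothetical, and the series is illustrative data: only the last three baseline values and the first three treatment values come from the text, the remaining values are made up, and dataset B is assumed to be dataset A multiplied by 10.

```python
import math


def segment_loglik(values):
    """Normal log-likelihood of one phase, evaluated at its own ML
    estimates of mean and variance (a plug-in simplification)."""
    n = len(values)
    mean = sum(values) / n
    var = sum((v - mean) ** 2 for v in values) / n
    var = max(var, 1e-12)  # guard against zero variance
    return -0.5 * n * (math.log(2 * math.pi * var) + 1)


def change_point_posterior(y, min_len=3):
    """Approximate posterior over the time-point (1-based) at which the
    second phase starts, under a uniform prior on that time-point."""
    n = len(y)
    candidates = range(min_len + 1, n - min_len + 2)
    loglik = {t: segment_loglik(y[:t - 1]) + segment_loglik(y[t - 1:])
              for t in candidates}
    peak = max(loglik.values())  # subtract the peak for numerical stability
    weights = {t: math.exp(ll - peak) for t, ll in loglik.items()}
    total = sum(weights.values())
    return {t: w / total for t, w in weights.items()}


# ILLUSTRATIVE data: 7 baseline observations, treatment from time-point 8.
series_a = [0.5, 0.3, 0.4, 0.6, 0.4, 0.3, 0.5,
            4, 3.2, 3.3, 3.6, 3.4, 3.8, 3.5]
series_b = [10 * v for v in series_a]  # ASSUMPTION: same data, 10x scale

posterior_a = change_point_posterior(series_a)
posterior_b = change_point_posterior(series_b)

mode_a = max(posterior_a, key=posterior_a.get)
mode_b = max(posterior_b, key=posterior_b.get)
print(mode_a, mode_b)
```

In this sketch, multiplying the data by a constant shifts every candidate's log-likelihood by the same amount, so the normalized posterior over the change-point is unchanged. Both series therefore put essentially all of their posterior mass on time-point 8, in contrast to the traditional index, which grows with the scale of the outcome.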

References

Hedges, L. V., Pustejovsky, J. E., & Shadish, W. R. (2012). A standardized mean difference effect size for single case designs. Research Synthesis Methods, 3(3), 224-239. https://doi.org/10.1002/jrsm.1052

Hedges, L. V., Pustejovsky, J. E., & Shadish, W. R. (2013). A standardized mean difference effect size for multiple baseline designs across individuals. Research Synthesis Methods, 4(4), 324-341. https://doi.org/10.1002/jrsm.1086

Ma, H. H. (2006). An alternative method for quantitative synthesis of single-subject researches: Percentage of data points exceeding the median. Behavior Modification, 30(5), 598-617. https://doi.org/10.1177/0145445504272974

Natesan Batley, P., Minka, T., & Hedges, L. V. (2020). Investigating immediacy in multiple-phase-change single-case experimental designs using a Bayesian unknown change-points model. Behavior Research Methods, 52(4), 1714-1728. https://doi.org/10.3758/s13428-020-01345-z

Natesan, P., & Hedges, L. V. (2017). Bayesian unknown change-point models to investigate immediacy in single case designs. Psychological Methods, 22(4), 743.

Parker, R. I., Hagan-Burke, S., & Vannest, K. (2007). Percentage of all non-overlapping data (PAND): An alternative to PND. The Journal of Special Education, 40(4), 194-204. https://doi.org/10.1177/00224669070400040101

Parker, R. I., & Vannest, K. (2009). An improved effect size for single-case research: Nonoverlap of all pairs. Behavior Therapy, 40(4), 357-367. https://doi.org/10.1016/j.beth.2008.10.006

Pustejovsky, J. E. (2018). Using response ratios for meta-analyzing single-case designs with behavioral outcomes. Journal of School Psychology, 68, 99-112. https://doi.org/10.1016/j.jsp.2018.02.003

Scruggs, T. E., Mastropieri, M. A., & Casto, G. (1987). The quantitative synthesis of single-subject research. Remedial and Special Education, 8(2), 24-33. https://doi.org/10.1177/074193258700800206